Meta-Nets: When AI Builds the Nets That Run AI

The story of building a self-creating Petri net system – and the late-night debugging session where human intuition and Claude Code combined to make it work.

By Alexej Sailer | January 2026

The Recursive Dream

What if you could describe an agentic process in plain English, and an AI would not only understand it but build the entire executable infrastructure? Not just generate some code – actually create places, transitions, connections, runtime configurations, and start the whole thing running?

That’s the dream behind the meta-net: a Petri net that creates other Petri nets. A system that bootstraps itself. An AI infrastructure that can build, improve, and extend itself.

I’ve been working toward this vision for months with AgenticOS. And recently, I reached a milestone – a 12-step pipeline that takes a natural language description and transforms it into a fully executable Agentic Net. But like all ambitious systems, it didn’t work on the first try. What happened next became one of the most illuminating experiences of human-AI collaboration I’ve ever had.

Act 1: Building the Pipeline

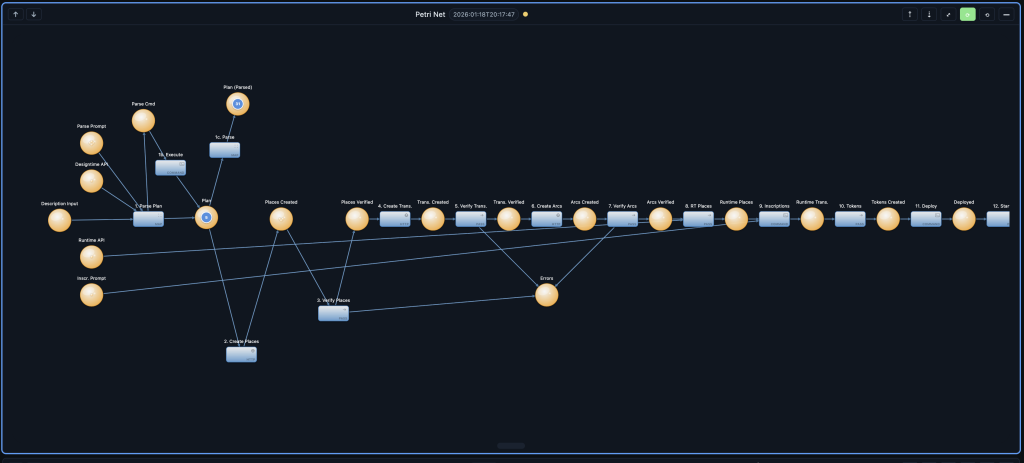

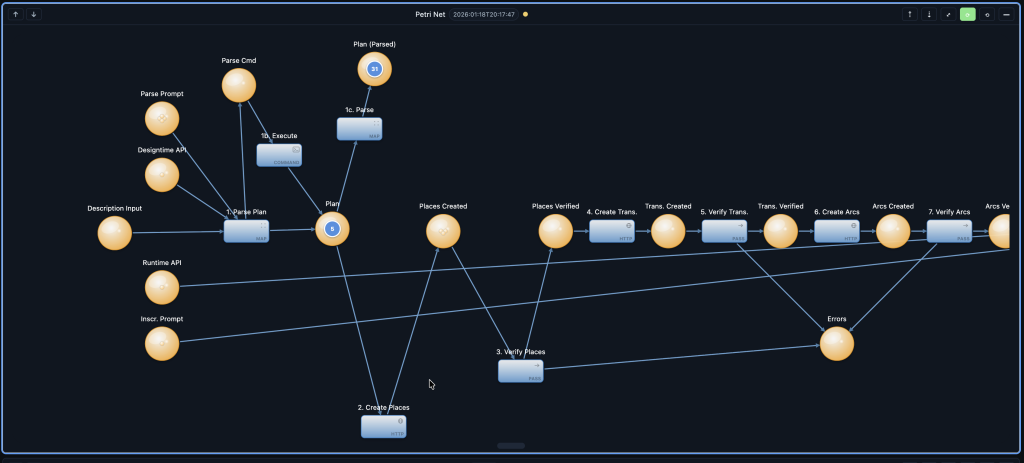

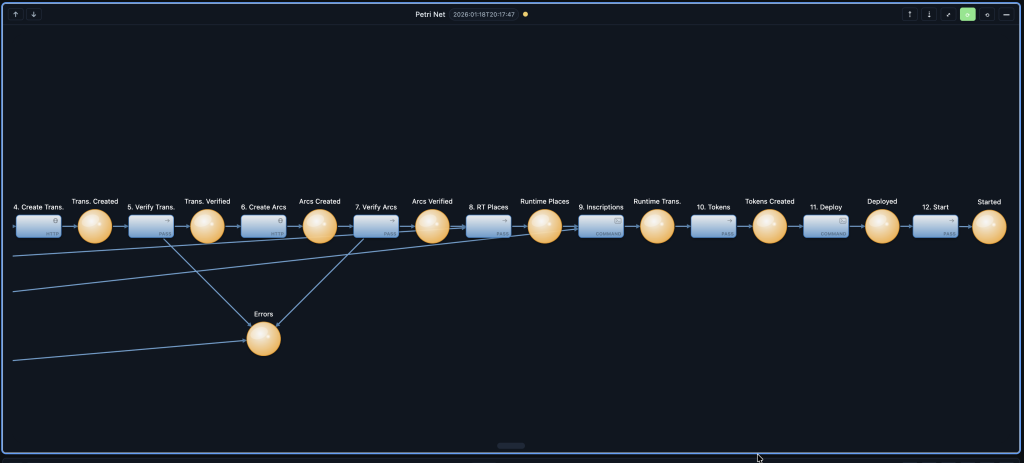

The architecture was elegant, at least on paper. Twelve steps, each handling one specific task:

1. Parse the description – Transform natural language into a structured plan
2. Create places – Build the visual structure
3. Verify places – Confirm they exist
4. Create transitions – Add the action nodes
5. Verify transitions – Confirm they exist
6. Create arcs – Connect everything together
7. Verify arcs – Confirm the connections
8. Create runtime places – Build the execution infrastructure
9. Create inscriptions – Define how transitions actually work
10. Create initial tokens – Seed the system with data
11. Deploy to executor – Register transitions for execution
12. Start everything – Fire it up
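The create-then-verify rhythm of these steps can be sketched in a few lines of Python. This is an illustration only, not the AgenticOS implementation: `run_pipeline`, `create_places`, `verify_places`, and the shape of the `context` dictionary are all hypothetical names chosen for the example, and the "net" is just an in-memory dictionary.

```python
from typing import Callable

# A step is a name plus an action that returns True on success.
Step = tuple[str, Callable[[dict], bool]]


def run_pipeline(steps: list[Step], context: dict) -> list[str]:
    """Run steps in order and stop at the first one that reports failure."""
    completed = []
    for name, action in steps:
        if not action(context):
            raise RuntimeError(f"step failed: {name}")
        completed.append(name)
    return completed


def create_places(context: dict) -> bool:
    # Hypothetical: "creating" a place just records its name.
    context["places"] = list(context["plan"]["places"])
    return True


def verify_places(context: dict) -> bool:
    # Hypothetical: verification compares what exists with what was planned.
    return set(context["places"]) == set(context["plan"]["places"])


context = {"plan": {"places": ["p-parse-command", "p-plan-parsed"]}}
done = run_pipeline(
    [("create places", create_places), ("verify places", verify_places)],
    context,
)
print(done)
```

Pairing every create step with a verify step means a failure surfaces at the step that caused it rather than several steps later.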

The critical piece was step 1: the t-execute-parse transition. This is where Claude Code would receive a description token, think about what Petri net structure it implies, and output a structured plan. The meta-net was, quite literally, using Claude to build other nets.

I worked with Claude Code to create the inscription – that complex JSON configuration that tells a transition what to read, what to do, and where to write. I described what I needed; Claude wrote the JSON. I pointed out edge cases; Claude handled them. Together, we built something neither of us could have built alone.

And then we ran it.

Act 2: The Silence

Tokens flowed into the input place. The transition fired – I could see it in the executor logs. Tokens moved to the output place. Everything looked right.

Except the output was wrong.

    "totalCommands": 0,
    "success": true,
    "batchResults": []

Zero commands. The transition consumed the input token, claimed success, but executed nothing. The command token went in, something came out, but the actual command – the Claude invocation that should have happened – never ran.

I checked the logs. No errors. I checked the places. Tokens were moving. I checked the inscription. It looked correct. The system was running, but it was hollow – a pipeline with water flowing through but no work being done.

This is the moment where traditional debugging becomes painful. You’re staring at a distributed system, tokens moving between services, JSON flowing through transformations. Where do you even start?

Act 3: Opening the Conversation

I opened Claude Code. Not to ask for a solution – I didn’t know what the problem was yet. I opened it to investigate together.

“The meta-net pipeline is running but totalCommands is always 0. Tokens are being consumed from p-parse-command and results appear in p-plan-parsed, but no commands are actually executing. Can you help me investigate?”

What happened next was remarkable. Claude didn’t guess. It didn’t suggest random fixes. It investigated.

First, it checked the transition status on the executor – running, 24 successful fires. Then it queried the output place to see what the results actually contained. Then it examined the inscription to understand what the transition expected. Each step informed the next.

Then Claude found something interesting in the token structure:

    {
      "data": {
        "data": "{\"kind\":\"command\",\"id\":\"...\",\"args\":{...}}"
      },
      "_meta": { ... }
    }

Do you see it? The command data wasn’t in data – it was in data.data, and it was stringified JSON inside that. The token was double-wrapped.

This is where my role shifted. I wasn’t the one who found the nested structure – Claude did that through systematic investigation. But I was the one who asked the right question:

“So the executor is looking for the command at the top level, but it’s actually buried inside data.data as a string?”

Claude confirmed. The token binding logic in the executor extracted the data field but didn’t handle the case where data itself contained another data field with stringified JSON. The command structure was there – it was just hidden behind two layers of wrapping.
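The failure mode is easy to reproduce in miniature. The sketch below is Python rather than the executor's Java, and `naive_commands` is a hypothetical stand-in for the binding logic, not the real code. It assumes the executor only recognises a command when the `kind` field sits directly inside `data`; the `id` value is a placeholder.

```python
import json

# A token shaped like the one found in the place: the command is a JSON
# string stored under data.data. The "id" value here is a placeholder.
token = {
    "data": {
        "data": json.dumps({"kind": "command", "id": "demo", "args": {}})
    },
    "_meta": {},
}


def naive_commands(token: dict) -> list:
    """Hypothetical binding that looks exactly one level deep."""
    data = token.get("data", {})
    if isinstance(data, dict) and data.get("kind") == "command":
        return [data]
    return []


found = naive_commands(token)
# Nothing is found, yet nothing failed either, so the run still reports success.
result = {"totalCommands": len(found), "success": True, "batchResults": []}
print(result)
```

Under that assumption the lookup never raises an error. It simply finds nothing, which would explain a run that reports success with zero commands and clean logs.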

Act 4: The Fix

Now we knew the problem. The solution required changes to two files:

CommandActionExecutor.java needed to unwrap double-nested tokens. Claude wrote a method called unwrapDoubleNestedData that handled two patterns: when the entire token is stringified JSON, and when there’s a nested data field containing stringified JSON.

TransitionOrchestrator.java needed to properly extract the data field when converting token bindings. The original code looked at the _meta structure but ignored the actual data content.
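For readers who want to see the idea without opening the Java sources, here is a minimal Python sketch of the unwrapping logic. It is not the code Claude wrote: the function names are illustrative, and it assumes a command payload can be recognised by its `kind` key. It covers both patterns described above, plus a token with only one level of nesting.

```python
import json
from typing import Any


def _parse_if_json(value: Any) -> Any:
    """Return the parsed object if value is a string holding a JSON object."""
    if isinstance(value, str):
        try:
            parsed = json.loads(value)
        except json.JSONDecodeError:
            return value
        if isinstance(parsed, dict):
            return parsed
    return value


def unwrap_double_nested_data(token: Any) -> Any:
    """Peel off "data" wrappers until a payload with a "kind" key appears."""
    # Pattern 1: the entire token is stringified JSON.
    token = _parse_if_json(token)
    if not isinstance(token, dict):
        return token
    # Pattern 2: a nested "data" field, possibly holding stringified JSON.
    current = token
    while isinstance(current, dict) and "kind" not in current and "data" in current:
        current = _parse_if_json(current["data"])
    # If unwrapping ends on something that is not an object, keep the original.
    return current if isinstance(current, dict) else token


command = {"kind": "command", "executor": "bash", "args": {"command": "echo hi"}}
wrapped = {"data": {"data": json.dumps(command)}, "_meta": {}}
print(unwrap_double_nested_data(wrapped) == command)
```

The loop stops as soon as it sees `kind`, so a command whose own arguments contain a `data` field is left untouched.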

I didn’t write this code. I couldn’t have – not without hours of tracing through the codebase. Claude wrote it, understanding the token flow, the expected schemas, and the parsing logic that needed to change. My contribution was different: I watched, I questioned, I verified.

When Claude proposed the fix, I asked: “What about the case where there’s only one level of nesting?” Claude adjusted. When the first approach had a subtle bug, I noticed it in the test output. Claude fixed it.

This is the dance. Human intuition catches what AI precision misses. AI capability implements what human vision imagines. Neither alone is sufficient.

Act 5: The Moment of Truth

With the fix deployed, we needed to verify. I asked Claude to create a simple test token:

    {
      "kind": "command",
      "executor": "bash",
      "command": "exec",
      "args": {
        "command": "echo Hello from command token"
      }
    }

We placed it in the input place and waited. Three seconds later, the output appeared:

    "totalCommands": 1,
    "success": true,
    "stdout": "Hello from command token"

It worked. But this was just an echo command. The real test was whether the meta-net could run Claude Code – using AI to execute AI.

We created another token, this one with a Claude command:

    {
      "kind": "command",
      "executor": "bash",
      "args": {
        "command": "claude -p 'Output only the text: PETRI_NET_TEST_PASSED' --no-session-persistence"
      }
    }

Thirty seconds of waiting. The transition fired. The result appeared:

    "stdout": "PETRI_NET_TEST_PASSED"

The meta-net was alive. Claude Code running inside a Petri net, orchestrated by AgenticOS, debugged by Claude Code working alongside a human. The recursion was complete.

What This Collaboration Taught Me

Looking back at this debugging session, I see a clear pattern in how we worked together:

| I Did | Claude Did |
|---|---|
| Noticed the symptom: totalCommands: 0 | Investigated systematically: checked states, queried places, examined tokens |
| Provided context from previous sessions | Traced through code to find the root cause |
| Asked guiding questions | Wrote the fix code |
| Decided to create manual test tokens | Created the tokens and executed the tests |
| Validated that results made sense | Adjusted fixes based on feedback |

This isn’t human-as-supervisor or AI-as-tool. It’s a genuine partnership where each party contributes what they do best. I bring intuition, pattern recognition, and the ability to ask “why does this feel wrong?” Claude brings systematic investigation, code comprehension at scale, and tireless execution of verification steps.

The Recursive Beauty

There’s something philosophically satisfying about what we built. The meta-net uses Claude Code to create Petri nets. We used Claude Code to fix the meta-net. The fix involved understanding how tokens flow through transitions – the very mechanism that will eventually let the meta-net improve itself.

This is the beginning of something larger. Today, the meta-net creates agentic processes from descriptions. Tomorrow, it might analyze its own execution logs and suggest optimizations. Eventually, it could propose improvements to its own architecture – changes that Claude Code and I would review together before deployment.

The dream isn’t just automation. It’s collaborative evolution – humans and AI working together to build systems that become more capable over time, each iteration informed by the partnership that created the last.

For the Curious: Where to Find It

If you want to explore this meta-net yourself, here’s where to look:

- Model ID: default
- Session: meta-net-v2
- Net ID: pipeline-12step
- Key Places: p-parse-command (input), p-plan-parsed (output)
- Key Transition: t-execute-parse (command execution)

The fix we made together is now part of the codebase – in CommandActionExecutor.java and TransitionOrchestrator.java in the agentic-net-executor module. The unwrapDoubleNestedData method handles the token structure patterns we discovered.

The Future Is Collaborative

This debugging session lasted perhaps two hours. In that time, we went from “something’s wrong” to “fully verified fix” to “meta-net executing Claude commands successfully.” Traditional debugging – reading through unfamiliar code, understanding token flows, writing and testing fixes – would have taken a full day or more.

But speed isn’t the point. The point is that neither of us could have done this alone. I don’t have Claude’s ability to trace through thousands of lines of code while holding the entire token flow in context. Claude doesn’t have my intuition for when something “feels wrong” or my ability to step back and ask the right question at the right moment.

Together, we built a system that builds systems. And when that system broke, we fixed it together too.

That’s the future I’m building toward: not AI replacing human developers, but human-AI partnerships creating things neither could create alone.

The meta-net is running. Tokens are flowing. And somewhere in the pipeline, Claude Code is waiting for the next description to transform into an executable Petri net – built by the same partnership that keeps the whole system alive.

This article documents a real debugging session. The conversation, the investigation, and the fix all happened as described. The only thing I couldn’t show you is how it felt – the moment when “totalCommands: 0” became “PETRI_NET_TEST_PASSED”.