Agentic Nets: The Future of Human-AI Partnership in Agent Orchestration

How I discovered a new paradigm for working with AI agents by building executable Petri nets together with Claude Code – and why this changes everything about how we develop intelligent systems.

By Alexej Sailer | January 2026

The Moment Everything Changed

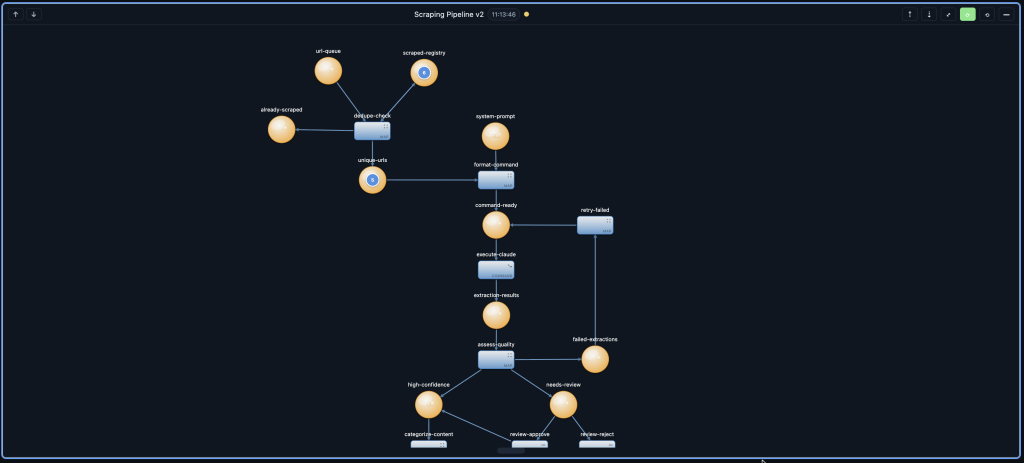

I was sitting at my desk, staring at a complex web scraping pipeline that needed to extract generator specifications from websites, deduplicate entries, execute extraction via Claude, assess quality, and route results based on confidence scores. Traditional development would have taken days of coding, testing, debugging, and refactoring.

Instead, I opened a conversation with Claude Code and said: “Let’s build a scraping pipeline together.”

What happened over the next few hours wasn’t just coding assistance. It was something fundamentally different – a true collaboration where the AI agent and I worked as partners, each bringing unique capabilities to solve a complex problem. Claude didn’t just write code; it thought alongside me, created debug tokens to understand data formats, built complex inscriptions I couldn’t have written myself, and helped me see patterns in the data I hadn’t considered.

This experience crystallized something I’d been developing for months with AgenticOS: we’re entering an era where AI agents aren’t tools we use – they’re partners we work with. And Agentic Nets are the framework that makes this partnership tangible, executable, and infinitely scalable.

What Are Agentic Nets?

Agentic Nets are executable Petri nets that combine the mathematical rigor of process modeling with the intelligence of AI agents. They transform static process diagrams into living systems that can route, transform, integrate, analyze, decide, and execute – all orchestrated through a visual framework that both humans and AI can understand and manipulate.

But here’s what makes them revolutionary: Agentic Nets aren’t just about AI – they’re designed to be built with AI. The framework itself becomes a shared language between human developers and AI agents, enabling a new kind of collaborative development where each party contributes their strengths:

| Claude Code Does | Human Does |
|---|---|
| Creates complex transition inscriptions | Designs the overall agentic process architecture |
| Debugs token formats and data structures | Observes token flow and identifies bottlenecks |
| Writes ArcQL queries for token selection | Analyzes results and extracts insights |
| Handles JSON escaping and syntax details | Structures data into meaningful subnets |
| Fixes host configurations and API calls | Controls execution and makes strategic decisions |
| Understands expected token schemas | Asks the right questions at the right time |
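For readers new to Petri nets, the firing rule that an "executable Petri net" builds on can be sketched in a few lines of Python. This is a generic illustration of the classic token game, not AgenticOS code: the `enabled` and `fire` helpers are hypothetical, and the marking here only counts tokens, whereas tokens in an Agentic Net carry structured data.

```python
from collections import Counter

# A marking maps place names to token counts. The place names are borrowed
# from the pipeline described later in the article; the helpers are hypothetical.

def enabled(marking, inputs):
    """A transition is enabled when every input place holds enough tokens."""
    return all(marking[place] >= needed for place, needed in inputs.items())

def fire(marking, inputs, outputs):
    """Consume tokens from the input places and produce tokens in the output places."""
    if not enabled(marking, inputs):
        raise ValueError("transition is not enabled")
    new_marking = Counter(marking)
    for place, needed in inputs.items():
        new_marking[place] -= needed
    for place, produced in outputs.items():
        new_marking[place] += produced
    return new_marking

marking = Counter({"url-queue": 2, "unique-urls": 0})
marking = fire(marking, inputs={"url-queue": 1}, outputs={"unique-urls": 1})
print(dict(marking))  # {'url-queue': 1, 'unique-urls': 1}
```

Everything else in an Agentic Net (queries, templates, LLM calls) can be read as a richer version of these two steps: decide whether a transition may fire, then move tokens.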

A Real Journey: Building the Generator Scraping Pipeline

Let me take you through the actual journey of building a production-grade scraping pipeline with Claude Code. This isn’t a sanitized tutorial – it’s the real, messy, iterative process that revealed the power of human-AI partnership.

The Pipeline We Built Together

Our goal was to create a data pipeline that scrapes generator specification websites, extracts structured data, and routes results based on confidence scores. The pipeline needed to:

- Accept URLs and deduplicate against already-scraped sites

- Format extraction commands for Claude to execute

- Run Claude with web fetch tools to extract generator specs

- Assess extraction quality and assign confidence scores

- Route high-confidence results to one place, uncertain results to review
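The steps above can be sketched as ordinary Python to show the intended flow before any net exists. This is a simplified stand-in under several assumptions: `fake_extract` replaces the Claude extraction and quality assessment with canned results, the command-formatting step is omitted, and the `0.7` threshold is a guess, since the article does not state the cut-off used for routing.

```python
CONFIDENCE_THRESHOLD = 0.7  # assumed cut-off; not stated in the article

def fake_extract(url):
    """Hypothetical stand-in for the Claude extraction and assessment steps."""
    canned = {
        "https://example.com/specs": {"specs": {"model": "X1"}, "confidence": 0.9},
        "https://example.com/forum": {"specs": {}, "confidence": 0.3},
    }
    return canned.get(url, {"specs": {}, "confidence": 0.0})

def run_pipeline(urls, extract=fake_extract):
    """Deduplicate URLs, extract each new one, and route results by confidence."""
    places = {"already-scraped": [], "high-confidence": [], "needs-review": []}
    seen = set()
    for url in urls:
        if url in seen:
            places["already-scraped"].append(url)
            continue
        seen.add(url)
        result = {"url": url, **extract(url)}
        if result["confidence"] >= CONFIDENCE_THRESHOLD:
            places["high-confidence"].append(result)
        else:
            places["needs-review"].append(result)
    return places

places = run_pipeline([
    "https://example.com/specs",
    "https://example.com/forum",
    "https://example.com/specs",
])
for name, tokens in places.items():
    print(name, len(tokens))
```

In the net itself each of these branches becomes a place, and each step becomes a transition that can be inspected and fired on its own.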

Phase 1: I Asked, Claude Investigated

I started by asking Claude to check the current state of the pipeline. Immediately, Claude dove into the data:

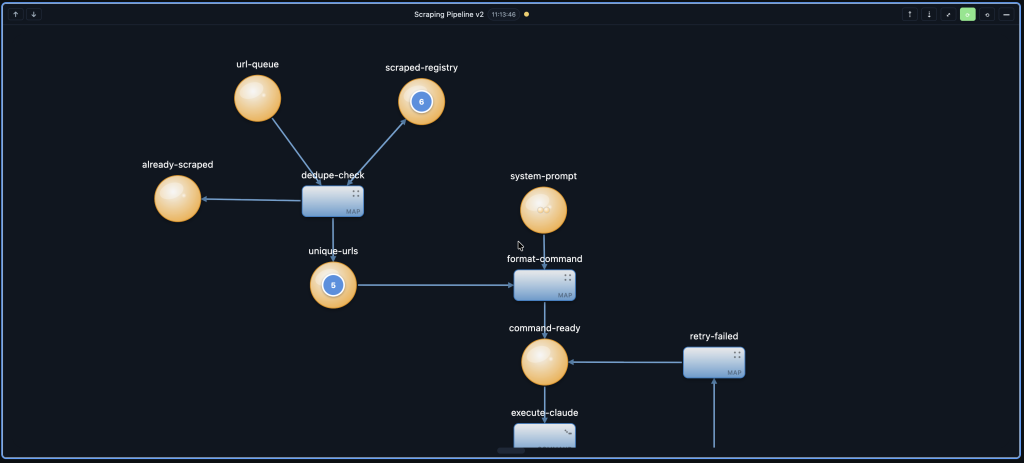

“Let me check what tokens are in each place… url-queue has 0 tokens, unique-urls has 2 tokens, high-confidence has 1 token. The token in high-confidence contains extraction results from a generator forum with confidence 0.3…”

Claude didn’t just report numbers – it read the actual token data, understood the structure, and gave me actionable intelligence. I could see that the pipeline had processed a URL but the confidence was low because the source was a forum, not a specs database.

Phase 2: Claude Creates the Hard Stuff

The heart of any Agentic Net is the transition inscription – a JSON configuration that defines how tokens flow, what queries select them, and what actions transform them. These inscriptions are notoriously difficult to write by hand:

```json
{
  "id": "t-dedupe-check",
  "kind": "map",
  "presets": {
    "url": {
      "placeId": "url-queue",
      "host": "default@localhost:8080",
      "arcql": "FROM $ LIMIT 1",
      "take": "FIRST",
      "consume": true
    },
    "registry": {
      "placeId": "scraped-registry",
      "host": "default@localhost:8080",
      "arcql": "FROM $",
      "take": "ALL",
      "consume": false,
      "optional": true
    }
  },
  "postsets": {
    "unique": { "placeId": "unique-urls", "host": "default@localhost:8080" },
    "duplicate": { "placeId": "already-scraped", "host": "default@localhost:8080" },
    "register": { "placeId": "scraped-registry", "host": "default@localhost:8080" }
  },
  "action": {
    "type": "map",
    "template": {
      "url": "${url.data.url}",
      "isDuplicate": false,
      "checkedAt": "${now}",
      "_sourceTokenId": "${url._meta.id}"
    }
  },
  "emit": [
    {"to": "unique", "from": "@response", "when": "success"},
    {"to": "register", "from": "@response", "when": "success"}
  ],
  "mode": "SINGLE"
}
```

I didn’t write this. Claude created it based on my description of what I needed. When I said “the registry preset should be optional because an empty registry is valid for the first URL,” Claude immediately understood and added "optional": true.

This is the magic of the partnership: I describe the intent, Claude handles the implementation details. The JSON escaping, the correct property paths like ${url.data.url} vs ${url._meta.id}, the emit conditions – all handled by Claude while I focus on the agentic process logic.
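To see why property paths such as `${url.data.url}` and `${url._meta.id}` are easy to get wrong, it helps to picture how a template might be resolved against the bound tokens. The sketch below is an assumption about the general mechanism, not the AgenticOS implementation: `render` and `lookup` are hypothetical helpers, and the token shape (`data` plus `_meta`) is inferred from the paths used in the inscription.

```python
import re

PLACEHOLDER = re.compile(r"\$\{([^}]+)\}")

def lookup(bindings, path):
    """Walk a dotted path such as 'url.data.url' through nested dictionaries."""
    value = bindings
    for key in path.split("."):
        value = value[key]
    return value

def render(template, bindings):
    """Recursively replace ${...} placeholders in a template with bound values."""
    if isinstance(template, dict):
        return {key: render(value, bindings) for key, value in template.items()}
    if isinstance(template, str):
        whole = PLACEHOLDER.fullmatch(template)
        if whole:
            # A string that is a single placeholder keeps the value's original type.
            return lookup(bindings, whole.group(1))
        return PLACEHOLDER.sub(lambda m: str(lookup(bindings, m.group(1))), template)
    return template  # booleans, numbers, and None pass through unchanged

bindings = {
    "url": {"data": {"url": "https://example.com/specs"}, "_meta": {"id": "tok-1"}},
    "now": "2026-01-01T00:00:00Z",  # illustrative timestamp
}
template = {
    "url": "${url.data.url}",
    "isDuplicate": False,
    "checkedAt": "${now}",
    "_sourceTokenId": "${url._meta.id}",
}
print(render(template, bindings))
```

A path that points at the wrong level, for example `${url.url}`, raises a `KeyError` in this sketch, which is the kind of mistake that is tedious to track down by hand.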

Phase 3: Debugging Together

When I clicked “Fire Once” on the dedupe-check transition, it failed. Instead of diving into logs myself, I asked Claude:

“I clicked fire once on dedupe check but not all presets are there”

Claude immediately investigated, calling the API to check token bindings:

```json
{
  "success": false,
  "ready": false,
  "boundTokens": {
    "url": [{ "data": { "url": "https://…" } }],
    "registry": []
  },
  "error": "Not all required presets have available tokens"
}
```

Claude spotted the issue: the registry place was empty (we had cleared it), and at that point the preset wasn’t yet marked as optional. Within seconds, Claude created an updated inscription with "optional": true and executed the update. The transition fired successfully.
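The readiness rule behind that error can be sketched as follows. This is a guess at the logic based only on the response shown above: `check_ready` is a hypothetical function, and the `missing` field is added here for illustration and does not appear in the actual API response.

```python
def check_ready(presets, bound_tokens):
    """A transition is ready when every non-optional preset has at least one bound token."""
    missing = [
        name
        for name, preset in presets.items()
        if not preset.get("optional", False) and not bound_tokens.get(name)
    ]
    if missing:
        return {
            "ready": False,
            "error": "Not all required presets have available tokens",
            "missing": missing,  # illustrative field, not part of the real response
        }
    return {"ready": True}

bound = {"url": [{"data": {"url": "https://example.com/specs"}}], "registry": []}

before_fix = {"url": {"placeId": "url-queue"}, "registry": {"placeId": "scraped-registry"}}
after_fix = {
    "url": {"placeId": "url-queue"},
    "registry": {"placeId": "scraped-registry", "optional": True},
}

print(check_ready(before_fix, bound))
print(check_ready(after_fix, bound))
```

With the empty registry, the first call reports the transition as not ready, and the second call, with the preset marked optional, reports it as ready.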

This is real-time collaborative debugging. I observe the symptoms, Claude investigates the cause, proposes a fix, and implements it – all in a continuous conversation.

Phase 4: Analyzing the Results

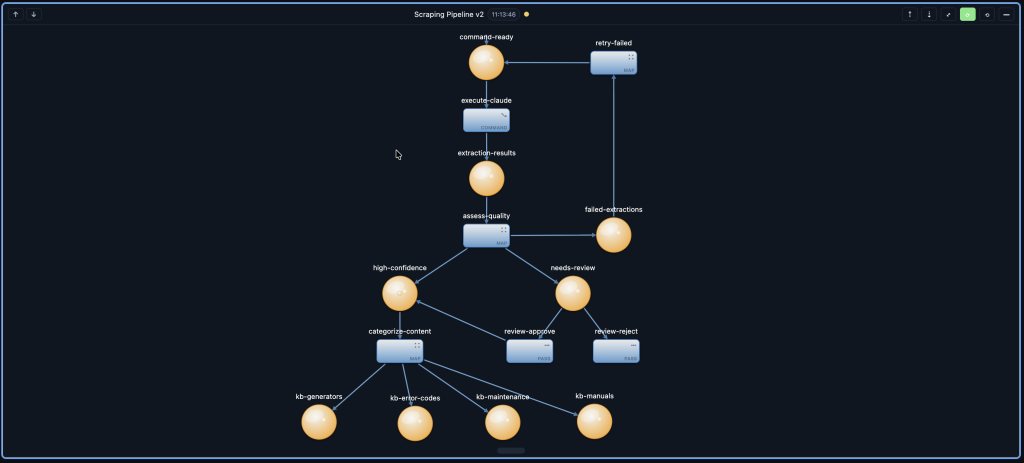

Once the pipeline ran, I asked Claude to show me what ended up in the high-confidence place:

```
=== high-confidence place ===
1 token containing:
- Source: https://www.smokstak.com/forum/
- Brands discussed: Onan, Kohler, Generac, Sears, Honda, Yamaha
- Categories: Military MEP Gen-Sets, Aircraft Gen-Sets, Portable Generators
- Thread counts: 20,271 Onan, 3,331 Kohler, 1,252 Generac
- Confidence: 0.3 (low - forum structure, not specs database)
- Extraction notes: "Original URL returned 404, forum structure changed"
```

Claude didn’t just dump JSON – it interpreted the data and presented it in a way I could understand. I could immediately see that while the forum was rich in content, it wasn’t the structured specs database we wanted. This insight helped me refine our URL sourcing strategy.

The Division of Labor

Through building this pipeline, a natural division of labor emerged. It’s not about what AI can do versus what humans must do – it’s about what each party does best.

What Claude Code Handles

1. Creating Inscriptions

Transition inscriptions are complex JSON structures with nested objects, escape sequences, and precise syntax. Claude writes them flawlessly based on natural language descriptions.

2. Token Format Debugging

When data doesn’t flow correctly, Claude investigates token structures, identifies schema mismatches, and explains what format is expected vs. what was received.

3. ArcQL Query Writing

Queries like FROM $ WHERE $.confidence > 0.7 ORDER BY $.timestamp DESC LIMIT 5 require understanding both SQL-like syntax and the token data structure. Claude handles this naturally.

4. API and Configuration Details

Host formats (default@localhost:8080), property paths (${input.data.url} vs ${input._meta.id}), and emit conditions – all the syntactic details that are easy to get wrong.

5. Real-time Investigation

When something fails, Claude calls APIs, reads token data, checks transition states, and reports findings – all without me having to write curl commands or parse JSON manually.
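For readers unfamiliar with ArcQL, the query quoted in item 3 above reads much like a filter, a sort, and a slice. The Python below is a rough equivalent under the assumption that `$` refers to the token's data and that `confidence` and `timestamp` are top-level fields. It is meant to convey the semantics and makes no claim about how ArcQL is evaluated.

```python
def select_recent_confident(tokens, min_confidence=0.7, limit=5):
    """Rough equivalent of:
    FROM $ WHERE $.confidence > 0.7 ORDER BY $.timestamp DESC LIMIT 5
    """
    matching = [t for t in tokens if t["confidence"] > min_confidence]
    matching.sort(key=lambda t: t["timestamp"], reverse=True)
    return matching[:limit]

# Illustrative tokens; ISO 8601 timestamps in one format sort correctly as strings.
tokens = [
    {"confidence": 0.95, "timestamp": "2026-01-10T09:00:00Z"},
    {"confidence": 0.50, "timestamp": "2026-01-11T09:00:00Z"},
    {"confidence": 0.85, "timestamp": "2026-01-12T09:00:00Z"},
]
for token in select_recent_confident(tokens):
    print(token["timestamp"], token["confidence"])
```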

What the Human Controls

1. Agentic Process Architecture

Deciding that we need a dedupe step before extraction, that quality assessment should happen after extraction, that results should route based on confidence – these are design decisions that require understanding the business problem.

2. Observing Token Flow

Watching tokens move through places, noticing when they accumulate unexpectedly, identifying bottlenecks – this visual pattern recognition guides optimization efforts.

3. Data Analysis and Insights

When Claude reports that the extracted data has low confidence, I interpret what that means for our data strategy. Should we find better sources? Adjust our extraction prompts? Create a review agentic process?

4. Structuring into Subnets

As the pipeline grows, I organize related functionality into subnets – a scraping subnet, a quality assessment subnet, a routing subnet. This architectural thinking keeps the system maintainable.

5. Asking the Right Questions

“What’s in high-confidence?” “Why didn’t the transition fire?” “Show me the token format.” The human drives the investigation by knowing what to ask.

Data Analytics with Agentic Nets

Agentic Nets aren’t just for agent orchestration – they’re equally powerful for data analytics. Every token that flows through the net carries structured data that can be queried, analyzed, and visualized.

The Data Is Always Visible

In traditional ETL pipelines, data disappears into databases and requires separate tools to inspect. In Agentic Nets, data lives in places as tokens – always visible, always queryable:

```
# Ask Claude to analyze extraction results
"Show me confidence distribution across all extractions"

# Claude queries the places and reports:
high-confidence: 8 tokens (avg confidence: 0.85)
  - 0.95 (specs database)
  - 0.85 x 6 (manufacturer sites)
  - 0.78 (retailer with specs)
needs-review: 3 tokens (avg confidence: 0.55)
  - 0.65 (forum with some specs)
  - 0.50 x 2 (blogs, unstructured)
```

Both Claude and I can see this data. Claude can run queries and compute statistics. I can observe patterns and make strategic decisions. We both have the data under control.
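The per-place figures in a report like this are simple to recompute from the raw confidence values, which is a useful cross-check on any summary. A minimal sketch, assuming the confidence scores have already been read out of the tokens in each place (`summarize` is a hypothetical helper):

```python
from statistics import mean

# Confidence values as listed in the report above, grouped by place.
places = {
    "high-confidence": [0.95] + [0.85] * 6 + [0.78],
    "needs-review": [0.65, 0.50, 0.50],
}

def summarize(places):
    """Return the token count and average confidence (2 decimals) for each place."""
    return {
        name: {"count": len(scores), "avg": round(mean(scores), 2)}
        for name, scores in places.items()
    }

for name, stats in summarize(places).items():
    print(f"{name}: {stats['count']} tokens (avg confidence: {stats['avg']:.2f})")
```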

Structuring Data into Subnets

As we collected more generator specifications, I realized we needed to organize the data. I asked Claude to help me structure a subnet for brand-specific analysis:

```
┌─────────────────────────────────────────────────────────────────────┐
│                        BRAND ANALYSIS SUBNET                        │
├─────────────────────────────────────────────────────────────────────┤
│                                                                     │
│  ┌──────────────┐     ┌─────────────┐     ┌──────────────────┐      │
│  │ all-specs    │────▶│ route-brand │────▶│ onan-specs       │      │
│  │ (input)      │     │ (PASS)      │────▶│ kohler-specs     │      │
│  └──────────────┘     └─────────────┘────▶│ generac-specs    │      │
│                                      ────▶│ other-specs      │      │
│                                           └──────────────────┘      │
│                                                    │                │
│                                                    ▼                │
│                                           ┌──────────────────┐      │
│                                           │ brand-summary    │      │
│                                           │ (LLM)            │      │
│                                           └──────────────────┘      │
│                                                    │                │
│                                                    ▼                │
│                                           ┌──────────────────┐      │
│                                           │ brand-reports    │      │
│                                           │ (output)         │      │
│                                           └──────────────────┘      │
│                                                                     │
└─────────────────────────────────────────────────────────────────────┘
```

I designed the structure. Claude created the inscriptions for route-brand (a PASS transition with emit conditions based on brand) and brand-summary (an LLM transition that generates per-brand analysis). The subnet became a reusable component for any brand-based analysis we needed.
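The routing decision made by route-brand can be sketched as a plain function. This assumes each spec token exposes a `brand` field, which the article does not show. The real transition expresses the same idea through emit conditions in its inscription, so treat `route_brand` as a hypothetical illustration of the logic only.

```python
KNOWN_BRANDS = {"onan", "kohler", "generac"}

def route_brand(token):
    """Pick the target place for a spec token based on its (assumed) brand field."""
    brand = str(token.get("brand", "")).strip().lower()
    return f"{brand}-specs" if brand in KNOWN_BRANDS else "other-specs"

tokens = [{"brand": "Onan"}, {"brand": "Kohler"}, {"brand": "Honda"}, {}]
for token in tokens:
    print(token.get("brand"), "->", route_brand(token))
```

The catch-all `other-specs` place matters: without it, tokens for unlisted brands would have nowhere to go and would pile up in `all-specs`.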

The Six Building Blocks

Every Agentic Net is built from six transition types. Each represents a different mode of human-AI collaboration:

| Transition | Purpose | Claude’s Role | Human’s Role |
|---|---|---|---|
| PASS | Route tokens by condition | Writes emit conditions | Designs routing logic |
| MAP | Transform data structure | Creates template expressions | Defines target schema |
| HTTP | Call external APIs | Handles auth, retries, errors | Chooses integrations |
| LLM | AI-powered analysis | Optimizes prompts | Guides analysis intent |
| AGENT | Autonomous execution | Executes with tools | Sets goals and constraints |
| COMMAND | System shell execution | Writes safe commands | Approves operations |

PASS and MAP are deterministic – Claude writes the logic, I verify it. HTTP and COMMAND involve Claude handling integration complexity while I control what systems we connect to. LLM and AGENT are the frontier – where Claude becomes an active participant in the agentic process, and I guide its behavior through goals and constraints.

Why This Changes Everything

Traditional development treats AI as a code generator – you describe what you want, it produces code, you integrate and debug alone. Agentic Nets flip this relationship.

| Traditional AI Assistance | Agentic Net Partnership |
|---|---|
| AI generates code on request | AI participates from design to debugging |
| Human debugs alone | AI investigates, explains, fixes in real-time |
| Data hidden in databases | Data visible as tokens, queryable by both |
| Context lost between sessions | Agentic Process state persists, AI can resume |
| AI doesn’t see runtime behavior | AI monitors token flow and transitions |

The Ultrathinking Advantage

When you work with Claude on Agentic Nets, you enter a state I call ultrathinking – a sustained, deep collaboration that combines:

- Human intuition: Understanding business requirements, recognizing patterns, making strategic decisions

- AI precision: Perfect syntax, instant API calls, flawless JSON, tireless investigation

- Shared visibility: Both see the same agentic process, the same tokens, the same results

- Rapid iteration: Changes take seconds, not hours

- Complementary strengths: Each party does what they do best

A single developer in ultrathinking mode with Claude can accomplish what previously required an entire team – not by working harder, but by working together in a fundamentally more effective way.

Getting Started

Ready to experience human-AI partnership? Here’s how to build your first Agentic Net with Claude:

Step 1: Describe Your Goal

Start conversationally. Don’t write specifications – have a discussion:

“I want to build an agentic process that monitors customer feedback, classifies sentiment, and routes complaints to the right team while tracking resolution metrics.”

Step 2: Let Claude Create the Structure

Claude will propose places (feedback-queue, classified, complaints, resolved) and transitions (classify-sentiment, route-complaint, track-resolution). You refine the design together.
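Before building any transition, it can help to write the proposed structure down as data and check that it is internally consistent. In the sketch below, the place and transition names come from the example above, but the arcs between them are an assumed layout, and `undefined_places` is a hypothetical helper.

```python
places = {"feedback-queue", "classified", "complaints", "resolved"}

# Assumed wiring: the article names the places and transitions but not the arcs.
transitions = {
    "classify-sentiment": {"inputs": ["feedback-queue"], "outputs": ["classified"]},
    "route-complaint": {"inputs": ["classified"], "outputs": ["complaints"]},
    "track-resolution": {"inputs": ["complaints"], "outputs": ["resolved"]},
}

def undefined_places(places, transitions):
    """List (transition, place) pairs that reference a place missing from the net."""
    problems = []
    for name, arcs in transitions.items():
        for place in arcs["inputs"] + arcs["outputs"]:
            if place not in places:
                problems.append((name, place))
    return problems

print(undefined_places(places, transitions))  # [] when every arc is valid
```

A check like this catches typos in place names early, before a transition silently waits on a place that does not exist.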

Step 3: Build Incrementally

Create one transition at a time. Test it. Ask Claude to show you what tokens look like. Adjust and continue.

Step 4: Observe and Analyze

Once tokens flow, ask Claude to analyze patterns. “What’s the average resolution time?” “Which complaint types take longest?” The data is there – you just need to ask.

The Future Is Collaborative

We stand at an inflection point. The tools we use are becoming partners. The assistants we query are becoming collaborators. The agentic processes we build are becoming shared artifacts that both human and AI can understand, modify, and improve.

Agentic Nets represent this future – a framework where human creativity and AI capability merge into something greater than either alone. Claude handles the complexity that used to slow us down: the JSON syntax, the API configurations, the debugging investigations. We focus on what matters: the architecture, the insights, the decisions.

The scraping pipeline I built with Claude took a few hours. The insights it continues to generate – about generator specifications, about data quality, about source reliability – will inform our work for months. And every time I return to it, Claude is there, ready to investigate, explain, and improve.

Welcome to the age of Agentic Nets. Welcome to the future of human-AI partnership.

Explore More

- Building Blocks Overview – Deep dive into the six transition types

- PASS Transitions – Token routing fundamentals

- MAP Transitions – Data transformation patterns

- HTTP Transitions – External API integration

- LLM Transitions – AI-powered analysis

- AGENT Transitions – Autonomous execution

- COMMAND Transitions – System operations

- CI/CD Pipeline Example – Real-world implementation